I think that in the future, Brain-Computer Interfaces (BCI) will be absolutely awesome. As much as the thought of using them is attractive, I think they also might be dangerous for ourselves if misused.

We can expect to see the emergence of awesome speeds of communication, new apps and tools, shareable mental models and knowledge bases, deep learning projects that will enable the creation of those apps, misuses of the BCI, a need for more open-source projects, and changes in the type of intelligence that humans will need to develop in the future. In this article, I’ll elaborate on those points.

Over the years, we’ve seen technology getting closer and closer to our bodies and minds. Television preceded personal computers, personal computers preceded smartphones, smartphones preceded virtual reality headsets, and virtual reality headsets preceded non-invasive BCIs (such as EMOTIV), which in turn preceded invasive BCIs. For some of those technologies, mass public adoption is something we’ll only see over the next decades. Anyway, let’s look to the future.

Awesome Speeds of Communication.

Imagine for a second being able to communicate super efficiently with something even better than language: raw thoughts, transferred instantly. Wouldn’t that be awesome? That would open up all sorts of possible new productivity and leisure applications.

Basically, everything that requires mental work related to an exchange of information between your head and something else would be accelerated.

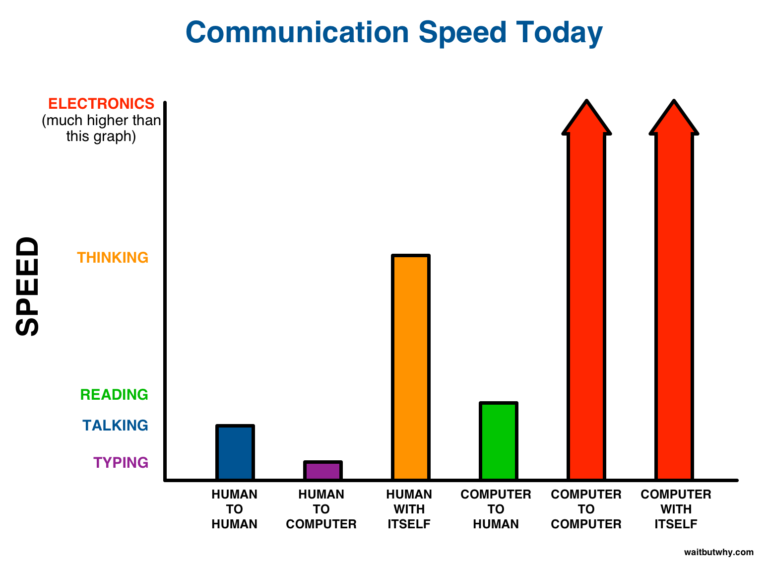

I think the following images from this very awesome blog post picture the point pretty well. Shortly said: communications speed could be brought to insane telepathic levels.

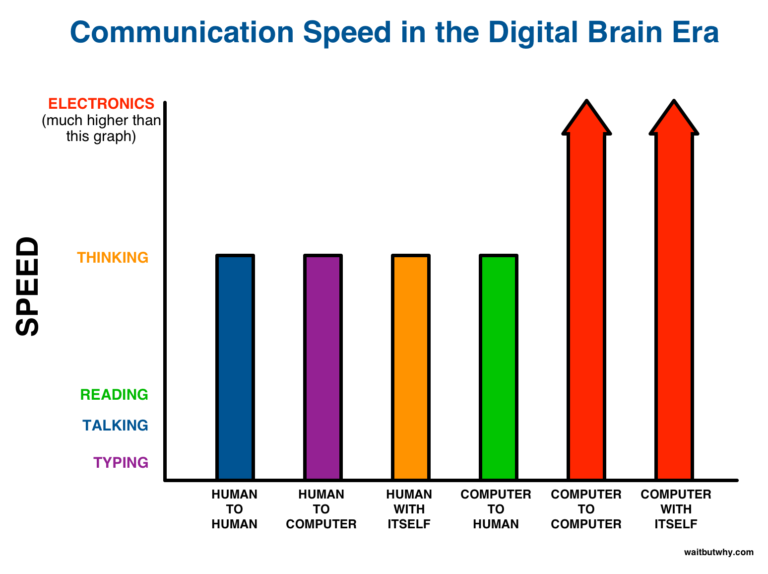

And with the BCI:

The Emergence of New Tools.

So, the BCI would augment our ability to communicate with people and with our environment (e.g., through a telepathic keyboard). But that isn’t limited to keyboards…

Imagine a music app able to recommend the best music for you according to your current brain activity. That would totally nail it. Or imagine being able to control some sort of physical body (remote or virtual) with your thoughts, as if it were yours.

You could also develop new senses, such as detecting electromagnetic variations, and get more inputs to your brain when a situation requires them for operating and controlling something.

Shareable Mental Models and Knowledge Bases

“Spawn new neurons for your brain in the cloud now”. I can’t wait to see this advertisement – I hope we’ll get there (although I’d prefer to have those neurons on-premise, saved locally near me if it’s not too much more complicated).

You could interact with extra brain matter in the cloud or elsewhere via your BCI. You could download pretrained neural networks containing knowledge available to everyone, a bit like a neural version of Wikipedia. You could also share some of your own external mental models with others.

I do tons of Google searches every day. Imagine being able to query the web with thoughts, and to get results as thoughts, as if you already knew what you were looking for. Accessing the right information at the right time should only get easier over time.

But this may also be dangerous.

Considering adversarial neural networks and adversarial attacks, we should question the security of such a BCI device.

For example: an app whose only goal is to reverse-engineer what makes you happy and drug you on that. If pushed to the extreme, I think that could become like an addiction, and some people are bad at managing that.

I’ve also had discussions with people saying that they’d like to disable fear in their brain. I think that would be extremely dangerous and could lead to hazardous behavior. Personally, I would like to continue to feel fear to avoid threats: I’d never turn fear off.

Hopefully, a brain implant won’t be given so much power over the brain that we could mess things up this badly. Well, I hope.

Thoughts on Open-Source Software.

I believe that the best operating system of the BCI will need to be open-source, like Linux, to ensure the trust of the public. Anyone should be able to audit the system by themselves (if they wanted to) in order to trust it. There should be no backdoor to your mind.

I think that a BCI OS will absolutely need to be entirely open-source to be trusted and audited by the world (like #blockchain technology is), and also that it be executed locally for security, like on a smartphone-like device.

As information is easy to copy, the possibilities for creating pretrained neural networks and sharing them are infinite. New ways of thinking and discoveries could spread very fast. Selecting good sources of information will become even more important.

I think that it will be essential for software accessing your thoughts to be transparent and trusted. With rising public awareness of the importance of privacy, it’s not surprising to see new regulation such as #GDPR making its entry into European law.

The rise of artificial intelligence will be unavoidable. Some people would say: “Why not be the machine ourselves and have us rise instead?” I think that’s the right approach to have. Lawmakers would have a strong interest in making the BCI usable instead of banned.

As the rise of artificial intelligence should naturally happen in the future, humans should gear up not to be left behind. I think, like Elon Musk, that we’ll eventually need to augment our capacities to keep up the pace. It’s way better to go in that direction than to leave it all to machines. We actually prefer to be the machines: this outcome carries much lower risks for the human race than doing nothing.

It somehow reminds me of an image of the evolution of man. There was a monkey, then a caveman, then a muscular, Viking-like man, then a nerd in front of a computer, then a satellite-man: someone who’s so technologized that he is the machine. Whether we want it or not, progress is unavoidable. I think people must accept the importance of embracing change, as it is riskier to stagnate.

By the way, I think that not only should the source code of the BCI operating system be open-source, but the permission management for what will be sent to apps also needs to be very clear. For example, not like that.

Would you want that to happen with each of your thoughts? I think every sane person would answer: no. Data protection is important. And making things clear, too. And making code open-source (or at least source-available) as much as possible will help. Fortunately, artificial neural networks can be downloaded and run locally without using APIs, so I think that most of the BCI’s code could be run locally, thus limiting cloud access.
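To make the idea of clear permission management a bit more concrete, here is a minimal sketch in Python. Everything in it is an assumption made for illustration: the `PermissionManager` class, the app name, and the signal types such as `"mood"` or `"inner_speech"` are hypothetical and do not come from any real BCI system. The only point is the deny-by-default behavior: an app sees nothing unless the user explicitly granted it.

```python
from dataclasses import dataclass, field


@dataclass
class PermissionManager:
    """Hypothetical deny-by-default registry of which app may read which signal type."""

    # Maps an app name to the set of signal types the user explicitly granted.
    grants: dict[str, set[str]] = field(default_factory=dict)

    def grant(self, app: str, signal_type: str) -> None:
        """Record an explicit user grant for one app and one signal type."""
        self.grants.setdefault(app, set()).add(signal_type)

    def revoke(self, app: str, signal_type: str) -> None:
        """Remove a grant; revoking something never granted is a no-op."""
        self.grants.get(app, set()).discard(signal_type)

    def is_allowed(self, app: str, signal_type: str) -> bool:
        """An app with no recorded grant is never allowed."""
        return signal_type in self.grants.get(app, set())

    def filter_for_app(self, app: str, signals: dict[str, object]) -> dict[str, object]:
        """Return only the signals this app was explicitly granted."""
        return {name: value for name, value in signals.items() if self.is_allowed(app, name)}


# Usage: the music app only ever receives the mood signal.
permissions = PermissionManager()
permissions.grant("music_app", "mood")
decoded_signals = {"mood": "calm", "inner_speech": "private", "motor_intent": "idle"}
visible_to_music_app = permissions.filter_for_app("music_app", decoded_signals)
```

In this sketch the filtering happens locally, before anything is handed to an app, which fits the idea of keeping most of the BCI’s code on the device.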

One of the main points of this article is that the BCI must be open-source in order for the people to trust it. It must be by the people, for the people. This way, no huge corporation will have the right (or the possibility) to put a backdoor on your mind and abuse your personal information. Open-source code is beautiful in a way: it allows everyone to follow the path of progress and to grow, not just the people at the top.

Disclaimer: I’m not a leftist, I just think that some things, such as open-source, are good for everyone.

The Role of Deep Learning in the BCI

So you want to avoid the risk of humans being surpassed by machines. How to do that? Well, the key is to master signal processing and time series processing, as this is one of the first steps to having a working BCI. So here is another main point to remember from this article:

HIGHLIGHT / TLDR: Signal processing is very important because thoughts must take place in the time domain, so signal processing must be solved before solving the rest of AI (ideally). You want to be the machine: in the end, artificial intelligence is less of a threat if it is used as a part of us as a productivity tool and as an extension of oneself.

So, other than spawning new neurons in the cloud, I expect to see lots of new productivity tools emerging from Deep Learning technologies. Deep Learning will naturally be used first to do the signal conversions and to transform information from medium to medium, which will then enable more applications.

For example, it could translate what you think into interpretable thoughts, and new thoughts into something you can think of and interpret.

Deep Learning will allow converting signals to what we could call unified formats, such that you could control many different remote bodies with the same action thoughts: bipeds, cars, planes, aquatic equipment, video game characters, and other sorts of controllable bodies.
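Here is a rough sketch, in Python, of what such a unified format could look like from the software side. It assumes the hard part (decoding brain signals into an intent) is already done by some model; the `ActionThought` structure, its two fields, and the `Car` and `Biped` classes are hypothetical names invented for this illustration, not an existing API.

```python
from dataclasses import dataclass
from typing import Protocol


@dataclass(frozen=True)
class ActionThought:
    """Hypothetical unified format: a decoded intent, independent of any body."""

    forward: float  # from -1.0 (backward) to 1.0 (forward)
    turn: float     # from -1.0 (left) to 1.0 (right)


class Body(Protocol):
    """Anything that can translate the unified format into its own commands."""

    def apply(self, action: ActionThought) -> str: ...


class Car:
    def apply(self, action: ActionThought) -> str:
        # A car splits the forward intent into throttle and brake.
        throttle = max(0.0, action.forward)
        brake = max(0.0, -action.forward)
        return f"car: throttle={throttle:.1f} brake={brake:.1f} steering={action.turn:.1f}"


class Biped:
    def apply(self, action: ActionThought) -> str:
        # A biped maps the same intent to a gait instead.
        if action.forward > 0:
            gait = "walk"
        elif action.forward < 0:
            gait = "step back"
        else:
            gait = "stand"
        return f"biped: gait={gait} heading_change={action.turn:.1f}"


def control(bodies: list[Body], action: ActionThought) -> list[str]:
    """Send the same action thought to every body; each interprets it its own way."""
    return [body.apply(action) for body in bodies]


# Usage: one thought, two very different bodies.
commands = control([Car(), Biped()], ActionThought(forward=0.5, turn=-0.2))
```

The design choice is that the thought format knows nothing about the bodies: adding a plane or a video game character only means writing one more class with an `apply` method.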

I predict that signal processing will become increasingly important.

On the Predictions Made by Ray Kurzweil

By the way, I think that this Wikipedia article is worth reading in depth to get a glimpse of how the future could be radically changed by the new flexibility of minds, according to Ray Kurzweil.

According to that article, minds will start evolving in all sorts of ways. Some artificial minds could exist in the cloud only and replicate themselves. Some other artificial minds could merge or split up. Kurzweil predicts that the lines around identity will blur, as intelligent beings could become so fluid. I think his predictions are another motivator for moving forward with the BCI instead of simply doing nothing. Let’s recall that as humans, we don’t want to become obsolete in the face of machines, so the BCI is a good way to keep up with the pace of progress.

The Type of Intelligence Humans Will Really Still Need.

I grew up with computers. When I was a teenager in secondary school in the PROTIC program, I was often thinking about intelligence, evolution, and access to information.

We had classes teaching us how to do good search queries on the web, and I would ponder how our generation (in general) is often criticized. Our generation has a shorter attention span than the previous generations, more ADHD, and so on. Still, I think my generation had the opportunity to develop a special type of intelligence compared to the generations before: for instance, I would often remember where to find information better than the information itself! This way, my mind could focus more on how to manage information than on directly memorizing it, as accessing information was cheap with internet access. I was constantly surfing the web in secondary school, and most of what I was doing at school required surfing the internet to write new texts or to summarize information on an unknown topic for my next homework. I’m glad I could surf the web this much as a teen, as it allowed me to further develop my internet intuition. I believe that this type of intelligence must be developed at some point.

And I think that the BCI will take this trend further. Imagine spawning external neurons for a second. You’re delegating the task of knowing things about a particular topic. When connected, you seem to remember things on that topic (or at least to know how to access information on that topic), and you can access the information quickly. But when disconnected, your brain has a limit on how much it can hold, so you haven’t copied everything “locally”. Obviously, we can’t learn everything in the world without external help. For example, I often ponder that it’d take a neural question-answering system that can map-reduce the whole of Wikipedia at once and retrieve relevant information, a bit like a neural Siri, but where the question is already an encoded thought vector rather than a raw textual sentence, and the response is also a thought vector.

However, the nuance is that even when disconnected, your brain MUST remember how to query for that information. And not only how to query it: you also need to know what can be queried. So you’re basically building a semantic mental lookup table and delegating pure memorization tasks, with access to an extra neural cache.
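As a toy illustration of that semantic lookup table, here is a small Python sketch. It is only an analogy under loud assumptions: the “thought vectors” are hand-made lists of three numbers, the source names are made up, and cosine similarity is just one simple way I picked to compare vectors. The table stores no knowledge itself, only what can be queried and where.

```python
import math
from typing import Optional


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors; 0.0 if either one is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


class SemanticLookupTable:
    """Hypothetical local index: remembers where to look, not the content itself."""

    def __init__(self) -> None:
        # Each entry pairs a topic vector with the name of an external source.
        self._entries: list[tuple[list[float], str]] = []

    def remember(self, topic_vector: list[float], source: str) -> None:
        """Store the fact that this topic can be queried at this source."""
        self._entries.append((topic_vector, source))

    def where_to_look(self, query_vector: list[float], min_similarity: float = 0.5) -> Optional[str]:
        """Return the best matching source, or None if nothing is similar enough."""
        best_source: Optional[str] = None
        best_score = min_similarity
        for topic_vector, source in self._entries:
            score = cosine_similarity(query_vector, topic_vector)
            if score >= best_score:
                best_source, best_score = source, score
        return best_source


# Usage with made-up vectors and source names.
table = SemanticLookupTable()
table.remember([1.0, 0.0, 0.0], "external:neuroscience")
table.remember([0.0, 1.0, 0.0], "external:cooking")
known = table.where_to_look([0.9, 0.1, 0.0])    # close to the neuroscience topic
unknown = table.where_to_look([0.0, 0.0, 1.0])  # nothing remembered about this
```

A `None` result here stands for the case where you don’t even know that something can be queried.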

You’re suddenly more focused on knowing what’s possible or not (what is and what isn’t) rather than on knowing things in depth. Before the BCI, one would focus on not being ignorant, so he’d memorize everything. After the BCI, one would focus on not being DOUBLY ignorant, so that he’d be merely ignorant.

The nuance is that when you’re doubly ignorant, you don’t even know what you don’t know. So you at least want to stop being doubly ignorant and to know what you don’t know, such that you can access that information later on, once you’re able to.

I think this is already happening to some extent with the web. Only, right now it’s quite costly to go search for information, and this cost will be reduced. The result should be that being ignorant will be less of a big deal, but it’ll be important to know what you don’t know and where to find it.

Parenthesis: I think every programmer already develops this type of intelligence to some extent in order to work. Working with computers daily, we need to know where to look for documentation and what the functions do; knowing what’s possible and which methods are available is crucial.

This is why skilled programmers can easily spot unskilled programmers: unskilled programmers won’t know how or where to look for things, nor what’s possible and what’s not.

On the other hand, it’s also unrealistic to expect a programmer to already know 100 frameworks in depth, and this is why programming job descriptions often suck. Someone can realistically only master a few frameworks at a time, because things are always changing. In 3 years, all the mainstream frameworks and technologies will have changed anyway.

This is somehow why the “capacity to learn quickly” is often cited as the best quality to have as a software engineer. I’d add a nuance to that: the double ignorance thing. This type of intelligence, knowing how to seek out information and to meta-organize thought processes, will only become more and more important.

Conclusion

We must be the machine. Be the machine. Improve the machine. Be ready. We are thinking machines.